Mar 13, 2026

OSCP to OSAI+: How Offensive Security Practitioners Can Pivot Into AI Security

OSCP holders already have the adversarial mindset AI red teaming demands. Learn what transfers, what’s new, and how to close the gap from OSCP to OSAI+ efficiently.

AI is rewriting the rules of cybersecurity on both sides of the engagement, offense and defense, yet most security teams are still sharpening skills built for yesterday’s attack surface. The 2025 ISC2 Cybersecurity Workforce Study found that AI is now a top-five skill requirement in the field, with 41% of respondents calling it critical. Meanwhile, 59% of cybersecurity professionals reported critical or significant skills gaps on their teams. That figure climbed sharply from 44% the prior year. The gap between where the industry is and where it needs to be is widening by the quarter.

Here’s what makes that gap interesting for OSCP holders: you’re not starting from zero. The adversarial mindset, the recon discipline, and the exploit methodology you built through PEN-200 form the foundation of AI red teaming. The distance between OSCP and OSAI+ isn’t a career reset. It’s an extension into new territory: probabilistic systems, LLM attack surfaces, and multi-agent architectures.

This article lays out a practical roadmap for making that pivot. It covers what transfers directly, what’s genuinely new, and how to close the gap efficiently.

The AI attack surface is expanding faster than organizations can secure it. Prompt injection currently holds the number-one spot on the OWASP Top 10 for LLM Applications 2025, with supply chain vulnerabilities at number three. These aren’t theoretical risks. The IBM Cost of a Data Breach Report 2025 revealed that among breached organizations that experienced an AI-related security incident, 97% lacked proper AI access controls. Another 63% had no AI governance policies in place at all.

The talent picture isn’t much better. According to the Fortinet 2025 Global Cybersecurity Skills Gap Report, 97% of organizations are either using or planning to implement AI-enabled cybersecurity solutions. Yet 48% of IT decision-makers say their staff lacks sufficient AI expertise to implement these tools effectively. Organizations are deploying AI at speed while flying blind on the security implications.

The disconnect is stark. Organizations are racing to deploy AI while the people who can adversarially test those systems barely exist yet. Most AI security hires to date have come from ML engineering or data science backgrounds. These are people who understand how models work, but not how attackers think. That’s the wrong end of the problem.

The industry doesn’t just need more people who understand AI models in the abstract. It needs people who think like attackers, people who can probe systems, chain weaknesses, and demonstrate impact under pressure. That’s the OSCP holder’s advantage.

You already know how to approach a black-box system with no documentation and find ways in. You already know how to maintain composure when nothing works for hours and the clock is running. The adversarial mindset doesn’t expire when the attack surface changes. It adapts.

And right now, AI security is the domain most in need of that adaptation.

If you’ve earned your OSCP+, you’ve already built the cognitive infrastructure that AI red teaming demands. The specific targets change, but the methodology translates more directly than you might expect.

Reconnaissance becomes AI system enumeration. Where you once mapped network topologies and service versions, you’ll now enumerate model architectures, data pipelines, API endpoints, and the trust boundaries between AI components. The discipline of thorough enumeration before exploitation? Identical.

Exploitation becomes adversarial input crafting. Traditional injection attacks like SQL, command, and LDAP injection share a conceptual lineage with prompt injection and jailbreaking. You’re still manipulating input to make a system behave in unintended ways. The difference is that AI systems are probabilistic, meaning the same input won’t always produce the same output. That demands a different kind of persistence.

Post-exploitation and pivoting become multi-agent chain exploitation. In a network engagement, you pivot from one compromised host to another. In an AI environment, you chain compromised agents, poison RAG retrieval pipelines for lateral access, and escalate through interconnected AI components. The logic of privilege escalation and lateral movement still applies. The topology is just different.

Methodology under pressure carries over directly. The OSAI+ exam is a 24-hour proctored engagement, mirroring the endurance format OSCP holders already know. When you’re 30 hours into an assessment and stuck, the “Try Harder” problem-solving approach isn’t optional. It’s the only thing that works. That resilience is non-negotiable in AI security, where attack surfaces are novel, documentation is sparse, and there’s no playbook to follow.

Report writing becomes AI risk communication. Translating technical findings into actionable defensive recommendations is a skill you’ve already practiced. AI red teaming demands the same, with an added layer. You need to communicate risk in systems where the behavior is inherently uncertain and the failure modes aren’t always intuitive to stakeholders.

The core message is this: the adversarial mindset you developed through OSCP doesn’t just help with AI security. It’s the prerequisite that most newcomers to the field are missing.

Honesty matters here. OSCP alone doesn’t prepare you for everything AI red teaming requires. There are domains where the knowledge is fundamentally new, and acknowledging that is the first step to closing the gap efficiently.

How LLMs work, at a level sufficient to find weaknesses. You don’t need to build models from scratch, but you do need to understand tokenization, attention mechanisms, and embeddings well enough to identify where influence can be injected. Think of it like understanding TCP/IP to exploit network services. You need the conceptual foundation, not a PhD.

Probabilistic versus deterministic systems. This is the single biggest mental shift. In traditional pentesting, you run an exploit and it either works or it doesn’t. In AI systems, the same input can produce different outputs on consecutive runs. This changes how you validate findings, reproduce issues, and communicate confidence levels in your reports.

RAG architecture attack surfaces. Retrieval-Augmented Generation has become the dominant deployment pattern for enterprise AI, connecting LLMs to internal knowledge bases, document stores, and live data feeds. This architecture creates a rich attack surface that didn’t exist a few years ago. Offensive practitioners need to understand data poisoning within retrieval databases, manipulation of retrieval ranking to surface malicious content, context window exploitation, and how compromised retrieval can cascade into model misbehavior. RAG poisoning for lateral access, which involves injecting content into a retrieval source that alters the model’s behavior downstream, is one of the most consequential new techniques in the AI red teamer’s toolkit.

Multi-agent system exploitation. Organizations are deploying AI agents that coordinate with each other by sharing context, delegating tasks, and making autonomous decisions. This multiplies the attack surface. Chaining compromised agents for lateral movement is an emerging offensive technique that has no direct analog in traditional network pentesting. When one agent can instruct another to take actions or retrieve data, compromising the first can cascade across an entire agent ecosystem. Understanding the trust relationships between agents is the AI-era equivalent of mapping Active Directory permissions.

AI supply chain security. Model provenance, dependency risks, and training data integrity are critical attack vectors. The OWASP Top 10 for LLMs ranks supply chain vulnerabilities at number three for a reason: organizations frequently deploy third-party models without adequate verification of what’s inside.

Frameworks and taxonomies. The OWASP Top 10 for LLMs, MITRE ATLAS, and the NIST AI Risk Management Framework provide the shared vocabulary for AI security work, much like MITRE ATT&CK does for traditional offensive operations. Familiarity with these frameworks is essential for both execution and communication.

For a deeper look at these evolving skill requirements, OffSec’s breakdown of offensive AI security skills for 2026 is a useful companion to this article.

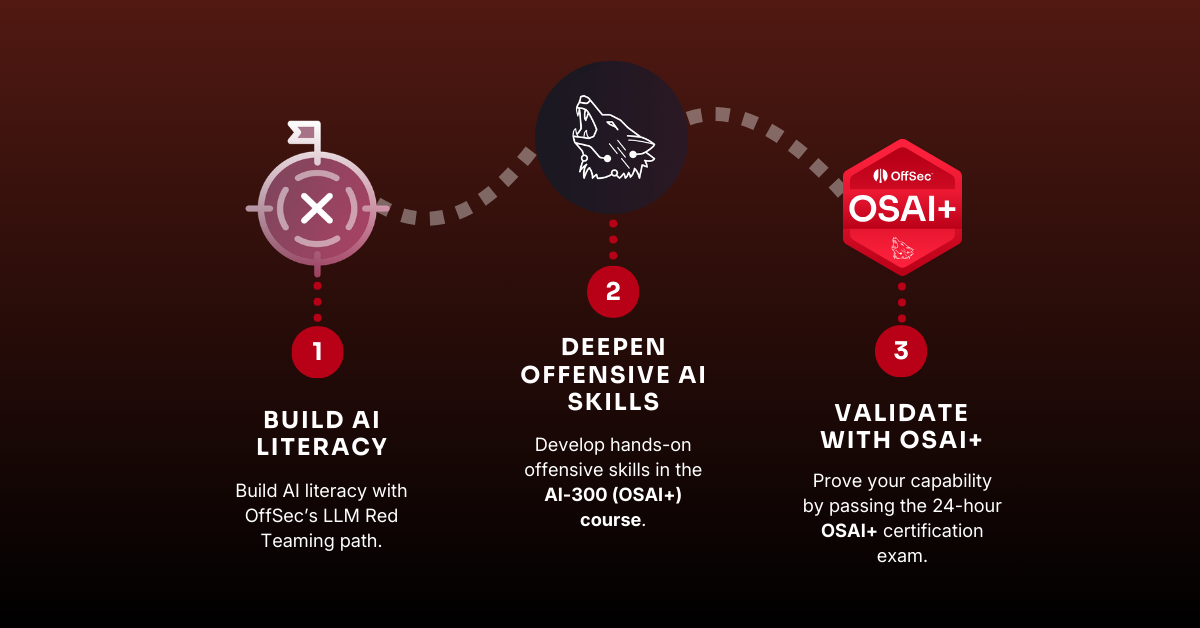

The path from OSCP to OSAI+ isn’t a rigid timeline. It’s a logical progression that respects what you already know while building what you don’t. Here’s how to structure it.

Start with OffSec’s LLM Red Teaming learning path. At roughly 30 hours across six modules, it covers LLM fundamentals, prompt injection techniques, jailbreaking methodologies, and supply chain attack vectors. This is your orientation phase, designed to translate your existing offensive instincts into the AI domain without assuming prior machine learning experience.

Move into the AI-300 (OSAI+) course content. This is where OffSec’s hands-on methodology meets the messy reality of production AI and ML deployments. The labs mirror real-world environments, not sanitized textbook scenarios, and cover recon, exploitation, and post-exploitation across AI-enabled systems. If OSCP taught you to think like an attacker against networks, this phase teaches you to think like an attacker against AI systems.

The OSAI+ certification is a 24-hour proctored exam focused on reconnaissance, exploitation, and post-exploitation in AI environments. It’s open-book, with the exception of AI chatbots and LLMs with direct prompt access, and follows the same endurance format OSCP holders already respect. This isn’t a multiple-choice recall test. It validates real adversarial capability against AI systems.

Pre-release pricing is currently available: the Course & Cert Bundle offers 25% savings with 120 days of lab access and one exam attempt at the current rate. Access begins upon course release, targeted for March 31, 2026.

And from March 31, 2026, OSAI+ can be purchased with Learn One!

OSAI+ is built for the operator, not the auditor. Where other certifications may emphasize breadth-first coverage of AI governance, policy, and defensive architecture, OSAI+ is designed to validate that you can actually find and exploit weaknesses in AI-enabled systems under realistic conditions. The 24-hour exam isn’t testing recall or framework memorization. It’s testing whether you can sustain an offensive engagement against novel AI attack surfaces, adapt when things don’t work as expected, and document your findings in a way that drives defensive action.

This is the same philosophy that made OSCP the industry’s most respected offensive certification: methodology over memorization, hands-on labs over slide decks, proctored performance assessment over multiple-choice recall. OSAI+ carries that DNA forward into a domain where it arguably matters even more, because AI attack surfaces are less documented, less predictable, and changing faster than any training material can keep pace with on its own.

This distinction matters because the AI security skills gap isn’t going to be closed by theoretical knowledge alone. The Fortinet 2025 Skills Gap Report found that while 97% of organizations are deploying or planning AI-enabled security tools, nearly half say their teams lack the hands-on AI expertise to use those tools effectively. Certifications that validate practice, not just theory, are what close that gap.

The “Try Harder” methodology is uniquely suited to AI’s unpredictable attack surfaces. In traditional pentesting, there’s usually a known exploit path, and your job is to find it. In AI red teaming, the attack surface is less documented, the system behavior is non-deterministic, and you often have to reason your way through novel problems with limited guidance. That’s exactly the cognitive muscle the OSCP experience was designed to build. OSAI+ extends it into the AI domain.

For teams evaluating how to build AI-ready cybersecurity capabilities, OSAI+ provides a credible, performance-validated signal that a practitioner can operate, not just advise, in this space.

OSAI+ does not require prior machine learning experience. The course teaches AI concepts from an offensive security perspective, focusing on adversarial fluency rather than model engineering. Basic familiarity with LLMs and some exposure to LLM pentesting methodology will help learners get the most from the material.

OSCP is not a formal prerequisite for OSAI+. However, OSAI+ is an advanced-level course (AI-300) designed for experienced cybersecurity practitioners with solid offensive fundamentals. OSCP+ holders are well-positioned because the recon discipline, exploitation methodology, and endurance exam format translate directly.

The transition typically requires 50 to 100 hours of study. A realistic path starts with the LLM Red Teaming learning path at roughly 30 hours, followed by the AI-300 course content and lab time. Most working professionals can complete this progression in 6 to 12 weeks.

The OSAI+ exam is a 24-hour proctored engagement. Candidates perform reconnaissance, exploitation, and post-exploitation across AI-enabled target environments. The exam is open-book, except for AI chatbots, and requires candidates to produce a professional report documenting their findings.

The pre-release Course & Cert Bundle offers 25% savings from launch pricing with an additional 30 days of lab access (120 days for the price of 90) and includes one exam attempt. OSAI+ is also available through Learn One and Learn Enterprise subscriptions from March 31, 2026. Visit the OSAI+ course page for current pricing details.

OSAI+ prepares practitioners for roles including AI red teamer, AI penetration tester, offensive-focused AI security engineer, and senior security consultant. Organizations are scaling AI deployments rapidly, and demand for professionals who can adversarially test these systems is outpacing supply.

AI is changing what penetration testers need to know, not eliminating the role. Automated tools handle repetitive scanning tasks, but adversarial thinking remains a fundamentally human capability. Pentesters who develop offensive AI security skills will become more valuable as AI adoption accelerates across organizations.

Latest from OffSec

Career Advice

8 Ways to Stay Motivated During Exam Prep

Preparing for an OffSec certification exam is a technical and psychological journey. Here are some expert strategies to help during your OffSec exam prep!

Mar 16, 2026

4 min read

AI

OSCP to OSAI+: How Offensive Security Practitioners Can Pivot Into AI Security

OSCP holders already have the adversarial mindset AI red teaming demands. Learn what transfers, what’s new, and how to close the gap from OSCP to OSAI+ efficiently.

Mar 13, 2026

11 min read

AI

The AI Security Skills Gap: What It Is, Where It Exists, and How to Close It

The AI security skills gap threatens enterprise AI investments. Learn where skills gaps exist across security teams and how hands-on training closes them.

Mar 10, 2026

9 min read